Stambia

The solution to all your data integration needs

The Stambia component for Snowflake

Snowflake is a data warehouse delivered as a service and operated from the cloud. Compared with traditional data warehouse systems, Snowflake is faster, more flexible, and simpler to use.

The Stambia component for Snowflake is built on the same philosophy: data integration that is fast, flexible, and simple to use, particularly with Snowflake. Stambia's unified approach lets users become familiar with integration tasks quickly and thereby significantly reduce time to market.

Use cases for the Stambia component for Snowflake

Simplicity and agility

Snowflake is chosen as a data warehouse solution for its ease of use, its ability to scale, and how quickly it lets users query data.

The key points are:

- No hardware to select, install, or configure

- No software to install or configure

- Maintenance and management are handled directly by Snowflake

These characteristics bring simplicity and agility to the implementation of a next-generation Snowflake data warehouse. The data integration layer that feeds it needs to be just as simple and agile.

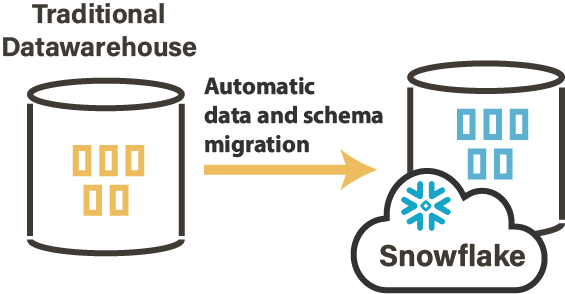

Migrating your on-premise data to Snowflake

The first phase of a Snowflake project, and not a minor one, is to move existing on-premise data to a new Snowflake data warehouse in the cloud.

A data migration project requires order and planning. A data warehouse often holds a large volume of data, so the data cannot be migrated all at once. A migration plan is therefore needed, along with a definition of the operations to perform (data filtering, data reconciliation, and so on).

"Through 2019, more than 50% of data migration projects will exceed their budget and timelines and/or harm the business because of flawed strategy and execution."

Source: Gartner

A data migration also requires automating and simplifying many actions. These are key to providing the agility needed to succeed in this essential first phase.
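
To illustrate what a migration plan can look like in practice, the sketch below splits a historical date range into consecutive batches and builds a filter for each one. It is only an illustration: the function names, the `order_date` column, and the batch size are assumptions made for this example and are not part of Stambia or Snowflake.

```python
from datetime import date, timedelta


def plan_batches(start: date, end: date, days_per_batch: int) -> list[tuple[date, date]]:
    """Split the half-open range [start, end) into consecutive batches."""
    if days_per_batch <= 0:
        raise ValueError("days_per_batch must be positive")
    batches = []
    cursor = start
    while cursor < end:
        # The last batch is shortened so that it never goes past `end`.
        upper = min(cursor + timedelta(days=days_per_batch), end)
        batches.append((cursor, upper))
        cursor = upper
    return batches


def batch_filter(column: str, lower: date, upper: date) -> str:
    """Build the filter that selects the rows of one batch (hypothetical column)."""
    return f"{column} >= '{lower.isoformat()}' AND {column} < '{upper.isoformat()}'"


# Hypothetical plan: migrate the first quarter of 2018 in batches of 31 days.
plan = plan_batches(date(2018, 1, 1), date(2018, 4, 1), 31)
for lower, upper in plan:
    print(batch_filter("order_date", lower, upper))
```

Because each batch is a half-open interval, no row is migrated twice and none is skipped, which also makes a per-batch reconciliation (for example, comparing row counts) straightforward.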

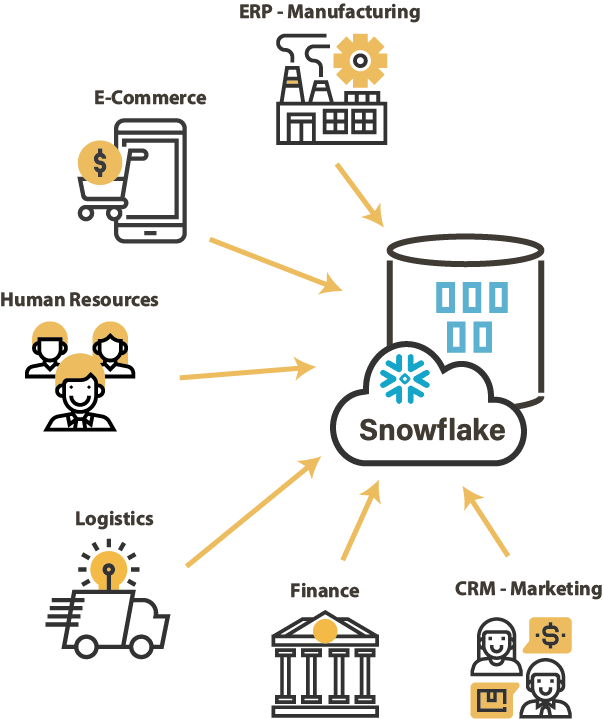

Integrating all your data

Like any data warehouse, Snowflake must be fed with data from different systems built on heterogeneous technologies. This calls for a data integration layer that can import that data while keeping it consistent and without multiplying the number of technical integration tools.

As part of an enterprise-wide data strategy, with accelerated cycles of architectural change, the data integration layer is key to unlocking the company's data and making fast decisions that shorten time to market.

Performance and scalability

Snowflake uses massively parallel processing (MPP): it automatically allocates compute nodes according to the power required by each "virtual warehouse" in which queries are processed. To do so, Snowflake relies on a cloud computing provider (Amazon AWS or Microsoft Azure). Snowflake thereby delivers performance and scalability, with scaling handled natively and transparently for users of this SaaS data warehouse.

In this context, the data integration layer must be able to take advantage of this type of architecture. A tool built natively on the ELT model is the most effective and efficient way to do so. An ELT tool pushes the processing down to Snowflake and benefits from its native capabilities, such as the optimization of data integration workloads.
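
The ELT pattern itself can be shown with a small, self-contained sketch. Here Python's built-in `sqlite3` module stands in for the target warehouse (an assumption made purely so the example runs anywhere; it is not Snowflake), and the table and column names are hypothetical. The point is the order of operations: the raw rows are loaded first, and the transformation is then a single set-based SQL statement executed by the database engine rather than by the integration tool.

```python
import sqlite3

# In-memory database used as a stand-in for the target warehouse (assumption).
conn = sqlite3.connect(":memory:")

# Extract + Load: source rows are copied as-is into a staging table.
source_rows = [("a1", "10.50", "es"), ("a2", "3.25", "fr"), ("a3", "7.00", "es")]
conn.execute("CREATE TABLE stg_sales (id TEXT, amount TEXT, country TEXT)")
conn.executemany("INSERT INTO stg_sales VALUES (?, ?, ?)", source_rows)

# Transform: typing, normalization and aggregation run inside the database.
conn.execute("CREATE TABLE sales_by_country (country TEXT PRIMARY KEY, total REAL)")
conn.execute(
    """
    INSERT INTO sales_by_country (country, total)
    SELECT UPPER(country), SUM(CAST(amount AS REAL))
    FROM stg_sales
    GROUP BY UPPER(country)
    """
)

result = conn.execute(
    "SELECT country, total FROM sales_by_country ORDER BY country"
).fetchall()
print(result)
```

With this split, the integration layer only orchestrates statements, so the heavy lifting scales with the warehouse's compute rather than with a separate transformation server.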

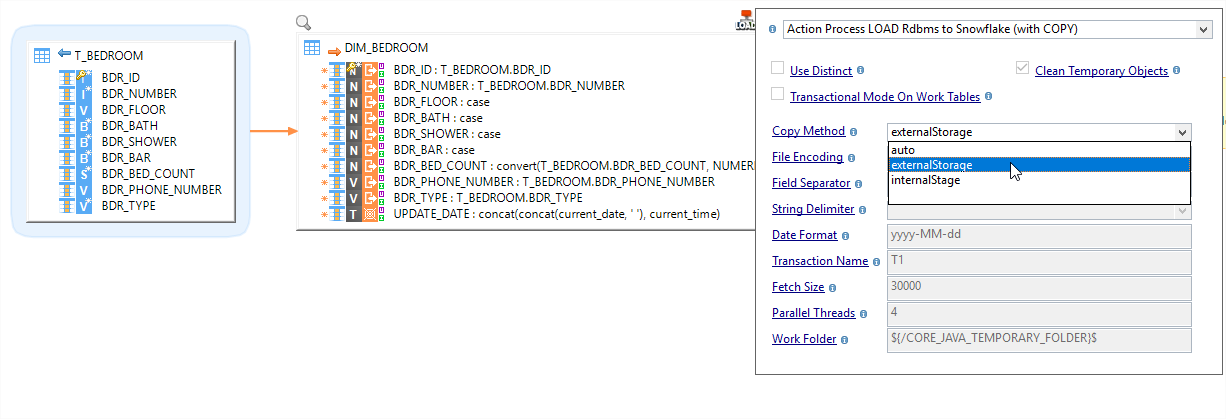

How does the Snowflake component work?

The Stambia component for Snowflake makes it quick and simple to connect to a Snowflake data warehouse. Stambia natively performs data integration in Snowflake using the ELT approach.

Some of its features are described below:

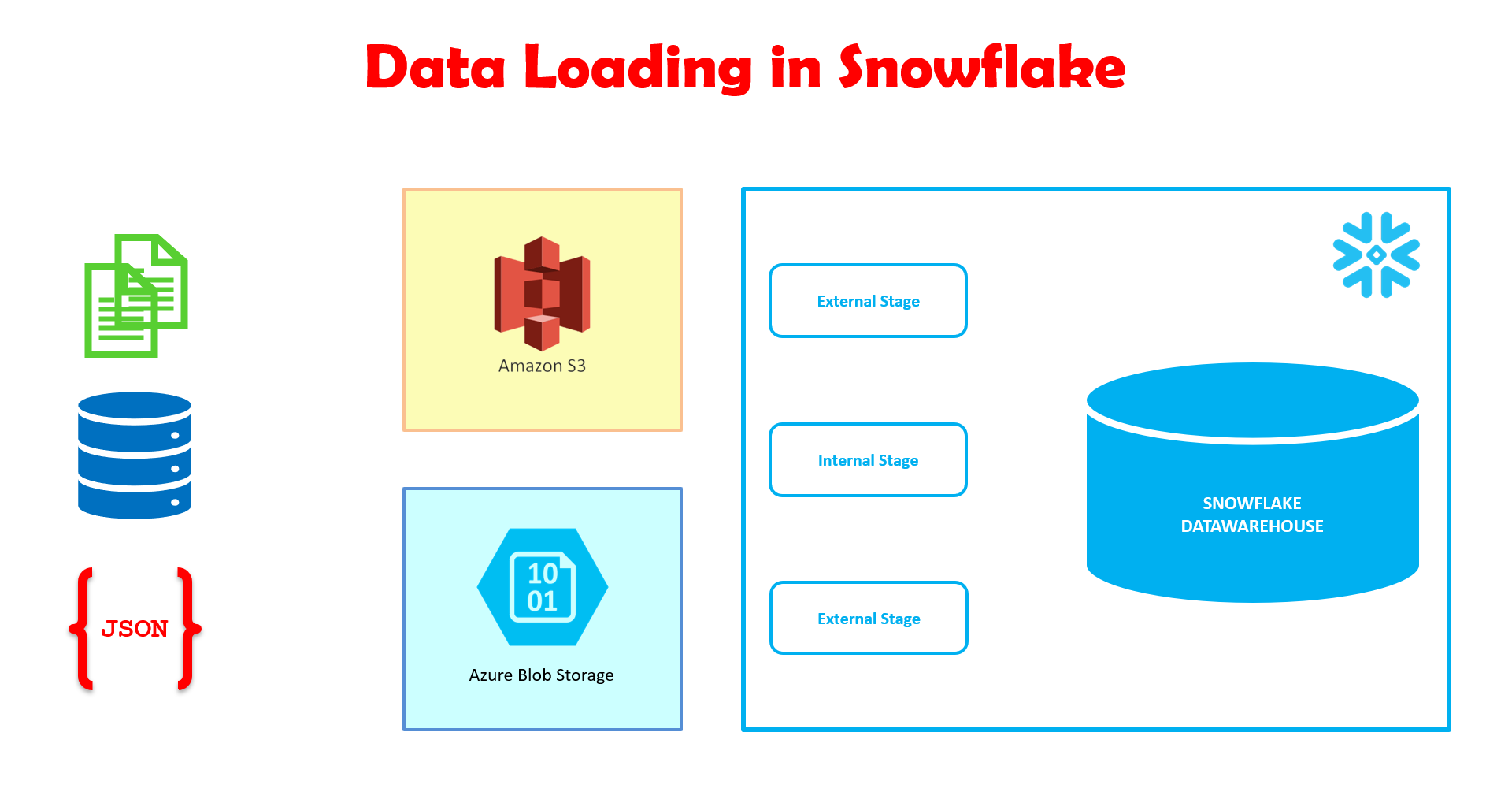

Flexibility with any cloud platform

Snowflake runs on a cloud platform such as Amazon AWS or Microsoft Azure. Data coming from the various source systems can be placed in a staging (landing) area in dedicated storage. Snowflake supports:

- Amazon S3 bucket as an external stage (or named external stage)

- Microsoft Azure Blob Storage (or named external stage)

- Snowflake internal stage

The Stambia component for Snowflake lets the user select the type and location of the external storage directly in the metadata. This saves time and improves productivity: for the staging area, the mapping uses what is defined in the metadata directly.
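
As a rough illustration of a metadata-driven staging area, the sketch below derives a Snowflake `COPY INTO` statement from a small metadata record. The `StageMetadata` class, its fields, and the bucket, stage, and integration names are hypothetical and do not reflect Stambia's actual metadata model or the SQL its templates generate; the statement shape should be checked against the Snowflake documentation before use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageMetadata:
    """Hypothetical metadata entry describing where files are staged."""
    kind: str              # "internal", "s3" or "azure"
    name: str = ""         # named stage, used when kind == "internal"
    url: str = ""          # bucket or container URL for external locations
    integration: str = ""  # storage integration name (assumed to exist already)


def copy_statement(table: str, stage: StageMetadata, path: str = "") -> str:
    """Build a COPY INTO statement for the stage described in the metadata."""
    if stage.kind == "internal":
        location = f"@{stage.name}/{path}" if path else f"@{stage.name}"
        options = ""
    elif stage.kind in ("s3", "azure"):
        base = stage.url.rstrip("/")
        location = f"'{base}/{path}'"
        options = f" STORAGE_INTEGRATION = {stage.integration}"
    else:
        raise ValueError(f"unsupported stage kind: {stage.kind}")
    return f"COPY INTO {table} FROM {location}{options} FILE_FORMAT = (TYPE = CSV)"


# The same load, pointed at two different staging areas by changing metadata only.
internal = StageMetadata(kind="internal", name="my_stage")
external = StageMetadata(kind="s3", url="s3://my-bucket/landing/", integration="my_int")
print(copy_statement("sales", internal, "2019/"))
print(copy_statement("sales", external, "2019/"))
```

The calling code never mentions the storage technology, so switching the staging area is a metadata change rather than a change to the load logic.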

A single design approach built around the Universal Mapping

One of Stambia's key strengths in addressing different integration needs is the Universal Mapping. Stambia lets you design mappings in one consistent, visual way, whatever source technology feeds Snowflake.

Designing a mapping is made simple by templates built specifically for Snowflake. The user concentrates on the business rules to apply as data moves to Snowflake, while the templates generate and execute the specific commands (scripts, SQL) that load Snowflake.

Developers of integration flows gain productivity as a result. They no longer need to program all the redundant and intermediate steps. In addition, the staging area is very easy to change directly in the mapping or in the metadata.
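
The division of labor between business rules and templates can be sketched in a few lines. This is a simplified illustration using Python's standard `string.Template`, not Stambia's template engine: the template text, the `render_mapping` function, and the table and column names are all assumptions made for the example.

```python
from string import Template

# Hypothetical template: the repetitive SQL skeleton is written once.
INSERT_SELECT = Template(
    "INSERT INTO $target ($columns)\nSELECT $expressions\nFROM $source"
)


def render_mapping(target: str, source: str, rules: dict[str, str]) -> str:
    """Generate the load statement from a mapping of target column -> expression."""
    return INSERT_SELECT.substitute(
        target=target,
        source=source,
        columns=", ".join(rules),
        expressions=", ".join(rules.values()),
    )


# The user only states the business rules; the template produces the SQL.
rules = {
    "customer_id": "id",
    "full_name": "TRIM(first_name) || ' ' || TRIM(last_name)",
    "country": "UPPER(country_code)",
}
sql = render_mapping("dw.customer", "stg_customer", rules)
print(sql)
```

Adding a column or changing a rule touches only the `rules` dictionary, and the generated statement follows, which is the productivity gain the template approach aims for.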

Fast migration of your on-premise data

The data migration described earlier is the starting point for implementing a data warehouse based on Snowflake. The "Stambia Replicator" replication template, adapted to Snowflake, migrates one-off or historical data from an on-premise data warehouse to Snowflake. With "Stambia Replicator", the generation of integration flows is fully automated.

The replication templates provided by Stambia increase productivity. They are fully customizable and allow the migration to be adapted to the chosen strategy. It is thus possible to select the data to migrate and to perform conversions, reconciliations, or any other specific operation.

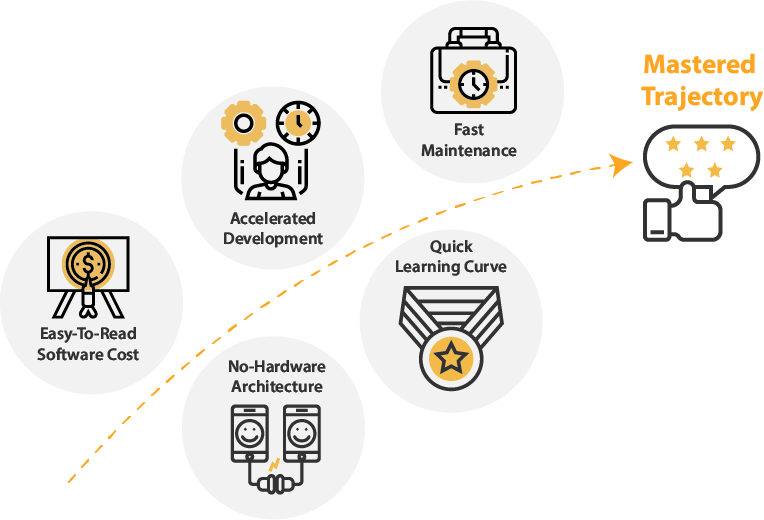

Control over project trajectory and costs

One of the key factors in selecting a data integration solution, especially for a scalable environment such as Snowflake, is the total cost of ownership (TCO). With its ELT approach, Stambia requires no dedicated infrastructure, and executions are not limited to a single environment. Pricing is not based on data volume or on a notion of machine power. Stambia therefore offers a simple, readable view of the cost of ownership.

Stambia is a unified solution that handles all types of data integration projects. With Stambia, there is no longer any need to choose a new solution for each change in architecture or each new business need. Stambia's components are also easy to understand and use, which shortens the learning curve. Stambia gives you full control over the trajectory of projects that require data integration!

Technical specifications and prerequisites

| Specifications | Description |
|---|---|
| Protocols | JDBC, HTTP |
| Structured and semi-structured data | XML, JSON, Avro (coming soon) |
| Storage | Type of storage used to hold temporary files in order to optimize performance |
| Connectivity | For the sources from which data can be extracted, see our technical documentation |
| Standard features | |
| Advanced features | |
| Stambia Designer version | Stambia Designer S18.3.1 or later |
| Stambia Runtime version | Stambia Runtime S17.3.0 or later |
| Additional notes | |


Stambia announces its merger with Semarchy, the Intelligent Data Hub™

Stambia Data Integration becomes Semarchy xDM Data Integration