Stambia

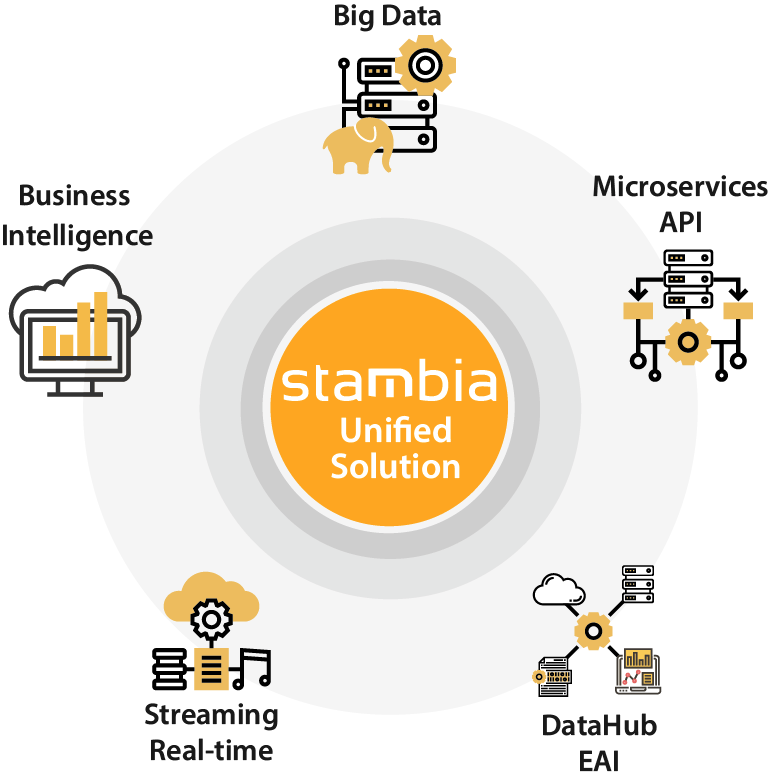

The solution to all your data integration needs

Powering Business Intelligence systems

(BI, Analytics)

Business Intelligence (BI, or Analytics) used to consist of consolidating past data and then analyzing it to help make decisions about future actions.

With new technologies and the growing expectation of immediacy, use cases have multiplied: organizations must now take actions that produce immediate results.

The consequences:

- Traditional BI data is now cross-checked in real time with the operational data used by applications, services, etc. Consolidation windows have shrunk from a week to a few days, then to 24 hours, and finally to real time. In other words: from a batch Business Intelligence system to "BI on the fly".

- A siloing of data integration solutions:

  - An ETL for BI

  - A tool for data pipelines

  - An EAI or ESB for traditional data exchange needs

  - A framework for APIs / microservices

In the physical world: with electronic price tags (electronic shelf labels) in a supermarket, prices can be adjusted easily and quickly according to traffic, competition, ...

In the e-commerce world: a retailer can retrieve its competitors' prices and adjust its own pricing accordingly.

What are the challenges of Business Intelligence (BI) today?

‘Traditional’ BI confronted with Big Data technology

The emergence of ecosystems like Hadoop has made it possible to address, in a different way, the constraints related to data volume and complexity, in particular the processing of unstructured data. Because these ecosystems provide storage that is reliable, simple and considered inexpensive, it is now possible to set up data lakes to absorb the endless deluge of data (from IoT / connected objects, social networks, ...).

Data mining has been replaced by Big Data technologies that facilitate the use of Machine Learning (ML) as well as Deep Learning.

The challenge for CIOs is to juggle all these technologies and build a coherent and reliable system that makes it possible to move, as needed, from descriptive BI to predictive or even prescriptive BI.

Example: in marketing, campaigns increasingly target individuals in a given context, using collected data.

Customer Data-Centric - Growing awareness among companies of the value of data

The relationship to data has changed: a few years ago, data was an afterthought, a consequence of an activity, generated by an application. Today, data is at the heart of the company's activity: it is the source of the business and no longer a by-product to analyze.

Companies become "Data-Centric" and "Data-Driven". The data multiplies and takes on different types and origins. It is then necessary to set up a system of data governance.

We must bring agility and responsiveness while continuing to maintain the same efficiency.

Since the data is at the center, the Information System must be modeled differently.

We become "Customer Data-Centric" and this implies a gradual overhaul of the way of designing an information system.

Using the Cloud’s power for Business Intelligence

“By 2023, 75% of all databases will be on a cloud platform, reducing the DBMS vendor landscape and increasing complexity for data governance and integration.”

Gartner, Jan 2019

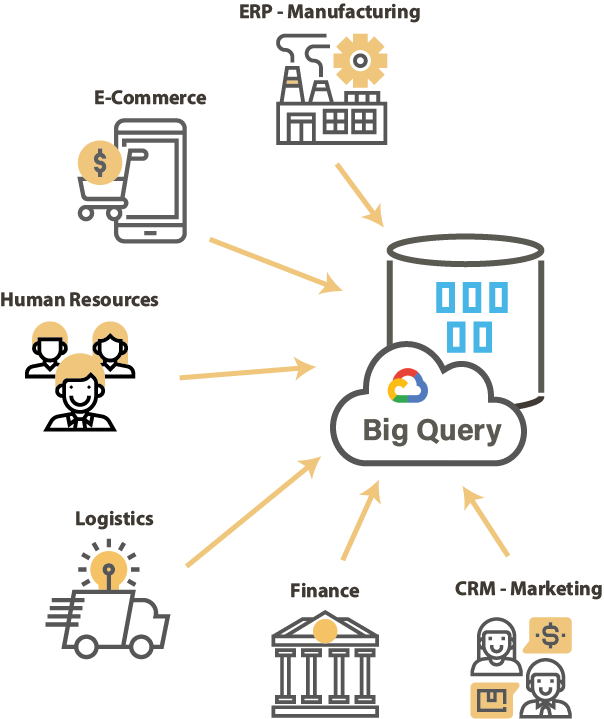

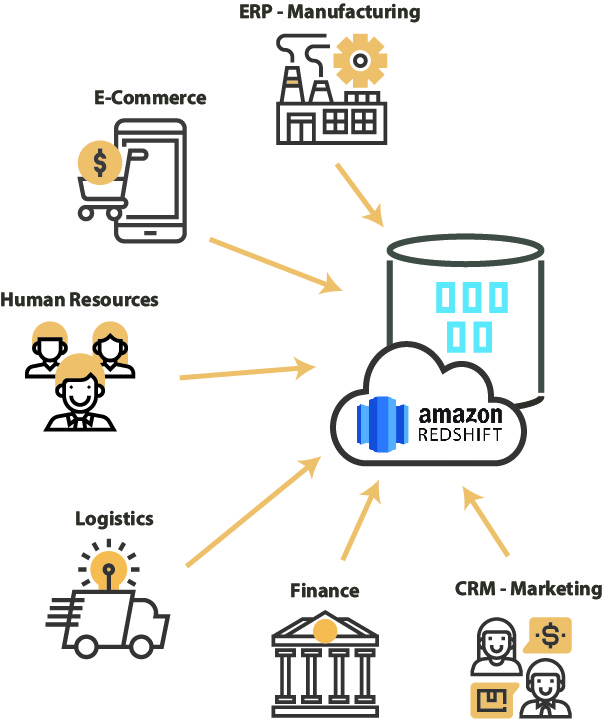

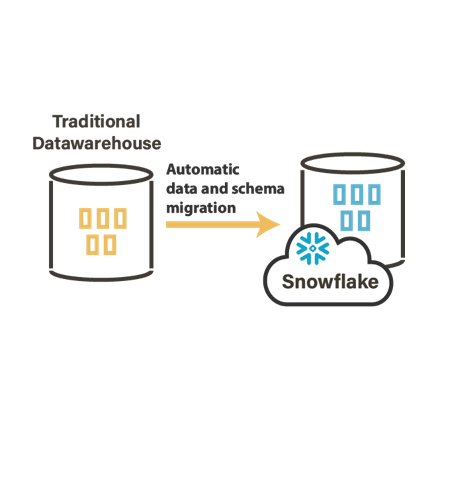

The movement towards the Cloud seems inevitable. In addition to traditional databases, new solutions such as Google BigQuery, Snowflake and Amazon Redshift are appearing.

These technologies leverage the intrinsic capabilities of the cloud to address the challenges of large volumes of data, and the need for performance in processing that data.

The traditional Business Intelligence system sometimes reaches a point where it runs "out of breath": its pricing model is not adapted to new challenges, and it is technically obsolete and unable to support the exponential load of new needs.

The challenge is to be able to intelligently use each technology according to its capacity while allowing these technologies to coexist and exchange data (feed each other).

Discover some of our Cloud connectors

User Requirement - Switch to Real-Time, Interactive Dataviz and BI

For a long time, the BI report, often an institutional one, was static and available only after hours of calculation, sometimes even published in the morning and printed automatically on the printer of the department concerned. As a result, Monday morning often meant intense stress and activity in the IT department.

This pressure has increased with the proliferation of mobile devices used in professional settings (tablet, smartphone, ...). Users now need to consult data and perform analyses in the field. They expect immediacy and are more demanding about the speed of data processing. Often they also want to act on the data themselves. This is "Self Service BI" / Mobile BI.

Choosing Stambia for BI: 4 selection criteria

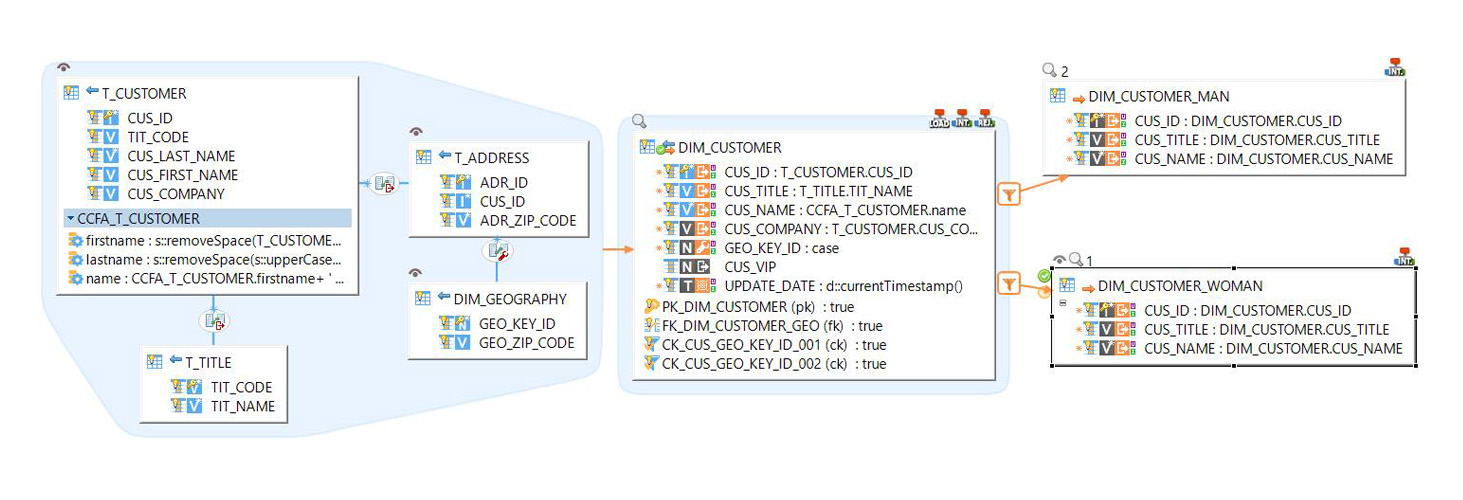

The simplicity of design in a few clicks

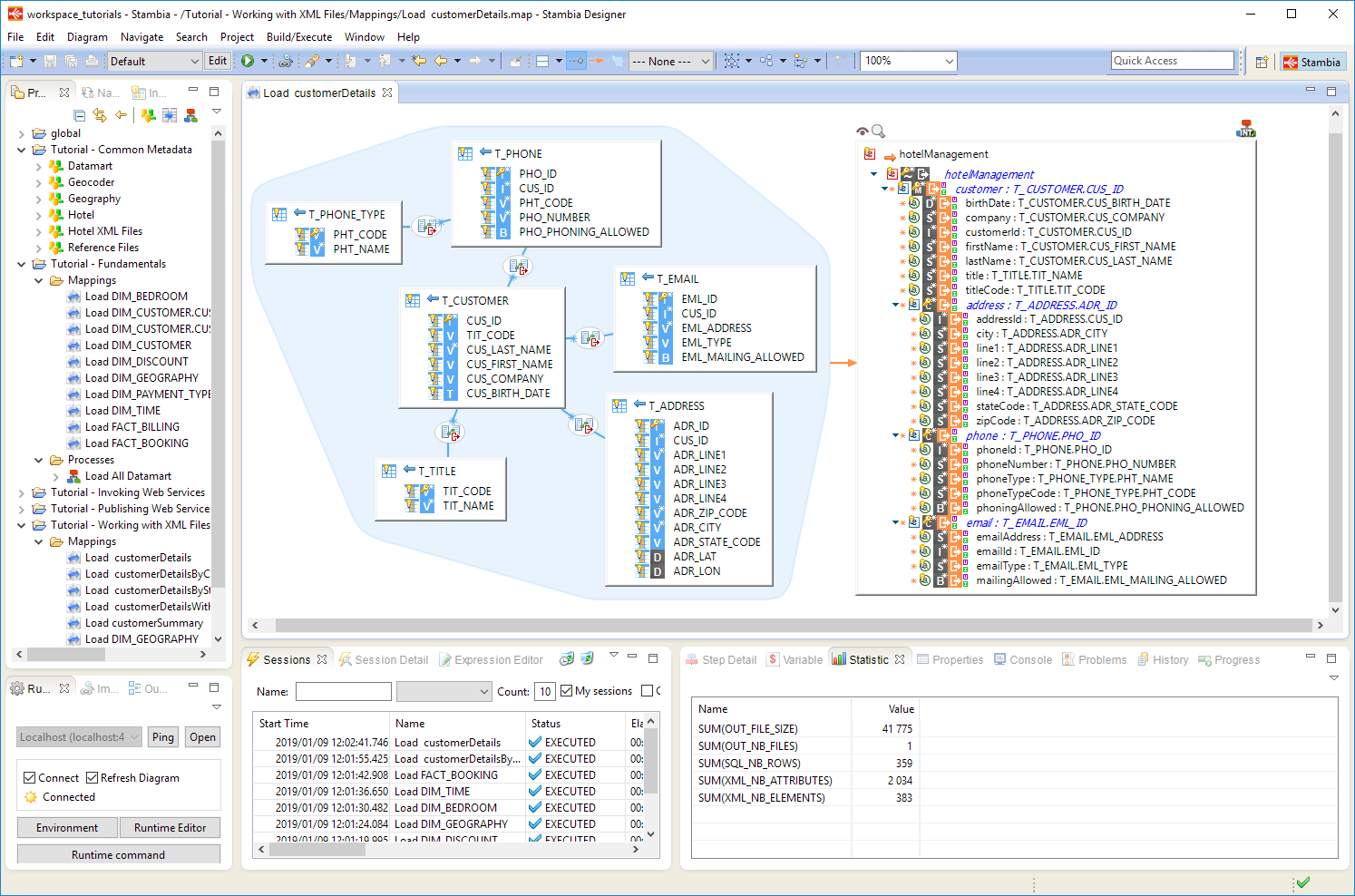

Universal mapping

Stambia simplifies the way you design data integration flows. The notion of universal mapping offers a business-oriented vision that does not require technical skills during the mapping phase (the design of relations between objects). The so-called "top-down" approach lets you focus on the "What" and lets the platform generate the "How" automatically.

Unified management of any type of project

This type of mapping makes it possible to respond to any type of data integration need. The same skills can be used to handle Business Intelligence or Big Data projects, as well as real-time oriented projects (streaming, API, Web Services ...). Using a universal vision of data transformation shortens the data integration learning curve within organizations.

BI as a service

Stambia's ability to expose complex Web Services in a matter of seconds helps extend the Business Intelligence capabilities of organizations. Any BI data can be exposed: aggregated data, detailed data, reference data or dimensions.

This feature does not require a complementary tool, thus guaranteeing an exceptional level of responsiveness at almost no cost.
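As an illustration of the idea of exposing BI data as a web service, here is a minimal sketch in plain Python. It is not Stambia's implementation: the table `agg_sales`, the `/sales` route and the function `sales_app` are assumptions made up for this example, and an in-memory SQLite database stands in for the BI system.

```python
import json
import sqlite3


def build_conn():
    """Create an in-memory database holding a hypothetical aggregated table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE agg_sales (region TEXT, total REAL)")
    conn.executemany(
        "INSERT INTO agg_sales VALUES (?, ?)",
        [("north", 170.0), ("south", 80.0)],
    )
    return conn


CONN = build_conn()


def sales_app(environ, start_response):
    """Minimal WSGI app: the path /sales returns the aggregated table as JSON."""
    if environ.get("PATH_INFO") != "/sales":
        start_response("404 Not Found", [("Content-Type", "application/json")])
        return [b'{"error": "not found"}']
    rows = CONN.execute(
        "SELECT region, total FROM agg_sales ORDER BY region"
    ).fetchall()
    body = json.dumps([{"region": r, "total": t} for r, t in rows]).encode("utf-8")
    start_response("200 OK", [("Content-Type", "application/json")])
    return [body]
```

To try it locally, the app can be served with the standard library, for example `wsgiref.simple_server.make_server("", 8000, sales_app).serve_forever()`.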

The performance of the ELT mode

ELT mode as a performance engine

Stambia favors delegating transformations to the underlying data platforms, an approach more commonly known as ELT (Extract, Load and Transform).

Because transformations run inside the database engine rather than in a separate transformation server, this approach is designed for performance.

ELT also means that scalability follows that of the underlying platform, with immediate processing and no additional cost on the data integration side.
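The ELT principle can be sketched in a few lines. The example below is a generic illustration, not code generated by Stambia: the tables `stg_sales` and `agg_sales`, the sample rows and the function `run_elt` are hypothetical, and an in-memory SQLite database plays the role of the target platform.

```python
import sqlite3

# Hypothetical source rows; in a real project they would come from an operational system.
SOURCE_ROWS = [
    ("2024-01-01", "north", 120.0),
    ("2024-01-01", "south", 80.0),
    ("2024-01-02", "north", 50.0),
]


def run_elt(conn, rows):
    """Load raw rows into staging, then transform them with SQL inside the target."""
    conn.execute("CREATE TABLE stg_sales (sale_date TEXT, region TEXT, amount REAL)")
    conn.execute("CREATE TABLE agg_sales (region TEXT PRIMARY KEY, total REAL)")
    # Extract + Load: rows are copied as-is, with no transformation on the way.
    conn.executemany("INSERT INTO stg_sales VALUES (?, ?, ?)", rows)
    # Transform: the aggregation is delegated to the database engine.
    conn.execute(
        "INSERT INTO agg_sales (region, total) "
        "SELECT region, SUM(amount) FROM stg_sales GROUP BY region"
    )
    conn.commit()
    return conn.execute(
        "SELECT region, total FROM agg_sales ORDER BY region"
    ).fetchall()


conn = sqlite3.connect(":memory:")
result = run_elt(conn, SOURCE_ROWS)
print(result)
```

The point of the design is that the integration side only moves data and issues SQL; the heavy lifting happens where the data already lives.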

The power of ELT for the Cloud and Big Data

The cloud has become the preferred architecture for IT departments, including platforms for implementing "No-Software" and "No-Hardware" strategies.

The ELT mode provides a lightweight (without a transformation engine) architecture that meets the requirements of cloud initiatives within organizations, ensuring performance and simplicity of architecture.

The logic is the same for Big Data projects. Through its ELT approach, Stambia takes advantage of the full power of Big Data and NoSQL platforms without adding complexity to the architecture.

Industrialization of flows

Industrialization is a key element of IT projects around data. There are many opportunities for businesses to leverage data with ease and efficiency. However, once the business teams have completed the experimentation and innovation phases, the industrialization phase begins.

Stambia is the ideal solution for the industrialization of data flows.

Its template-based approach makes it possible to encapsulate technical know-how and reuse it at industrial scale, without requiring users to master the technical details.

Proximity to our clients

Our customers recommend us. For Stambia, this is the greatest reward.

Stambia, before being a technological solution, is a team of experts who are passionate about what they do.

We seek to understand the needs of our users and to offer them the best, thus producing for everyone the expected level of value.

What if you rediscovered with Stambia what it is to have quality software support and a publisher team at your service?

How to accelerate Business Intelligence/Analytics

Intelligent integration modes

- Cancel & replace mode: in this mode the data is deleted and reloaded entirely (useful for initializing data or ensuring the consistency of some reference data).

- Incremental mode: in this mode only the latest changes are taken into account. This type of integration is useful for large volumes of data (facts). It can be paired with event detection features on sources to optimize flows and ensure fresh data.

- Historized mode, often called Slowly Changing Dimensions (SCD): this mode tracks changes in the data over time, so that the evolution of the data can be analyzed later.

- Automatic feed of Data Vault models: many models exist for Business Intelligence systems, such as star schemas or Data Vault modeling. Stambia is compatible with these different models and allows automatic feeding of tables and their associated dimensions or satellites.
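The first two modes in the list above can be sketched as follows. This is a generic illustration, not a Stambia template: the tables `dim_country` and `fact_orders`, the `updated_at` watermark column and both function names are assumptions chosen for the example, with SQLite standing in for the target database.

```python
import sqlite3


def cancel_and_replace(conn, rows):
    """Delete the whole target table and reload it from the source rows."""
    conn.execute("DELETE FROM dim_country")
    conn.executemany("INSERT INTO dim_country (code, name) VALUES (?, ?)", rows)
    conn.commit()


def incremental_load(conn, rows, last_loaded_at):
    """Upsert only rows changed after the previous load; return the new watermark."""
    changed = [r for r in rows if r[2] > last_loaded_at]
    conn.executemany(
        "INSERT OR REPLACE INTO fact_orders (order_id, amount, updated_at) "
        "VALUES (?, ?, ?)",
        changed,
    )
    conn.commit()
    return max((r[2] for r in changed), default=last_loaded_at)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE dim_country (code TEXT PRIMARY KEY, name TEXT)")
conn.execute(
    "CREATE TABLE fact_orders (order_id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)"
)

# Reference data: the second run fully replaces the first.
cancel_and_replace(conn, [("FR", "France"), ("DE", "Germany")])
cancel_and_replace(conn, [("FR", "France"), ("ES", "Spain")])

# Facts: the second run only touches rows newer than the stored watermark.
orders = [(1, 10.0, "2024-01-01"), (2, 20.0, "2024-01-02")]
watermark = incremental_load(conn, orders, "")
orders = [(1, 10.0, "2024-01-01"), (2, 25.0, "2024-01-03"), (3, 5.0, "2024-01-03")]
watermark = incremental_load(conn, orders, watermark)
```

The watermark here relies on ISO-formatted dates sorting correctly as text; a real flow might instead use change data capture or another event detection mechanism on the source.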

Integration types

Incremental

Cancel & Replace

Slowly Changing Dimensions

Data Vault

Specific / homemade template
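For the Slowly Changing Dimensions type, a common variant (often called SCD type 2) keeps one row per version of a record, with validity dates. The sketch below shows that variant only as an illustration; the table `dim_customer`, its columns and the function `apply_scd2` are hypothetical and do not describe how Stambia's own templates are written.

```python
import sqlite3


def apply_scd2(conn, customer_id, city, load_date):
    """Close the current version if the tracked attribute changed, then open a new one."""
    current = conn.execute(
        "SELECT city FROM dim_customer WHERE customer_id = ? AND valid_to IS NULL",
        (customer_id,),
    ).fetchone()
    if current is not None and current[0] == city:
        return  # nothing changed: keep the current version open
    if current is not None:
        # End-date the version that was current until this load.
        conn.execute(
            "UPDATE dim_customer SET valid_to = ? "
            "WHERE customer_id = ? AND valid_to IS NULL",
            (load_date, customer_id),
        )
    conn.execute(
        "INSERT INTO dim_customer (customer_id, city, valid_from, valid_to) "
        "VALUES (?, ?, ?, NULL)",
        (customer_id, city, load_date),
    )
    conn.commit()


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE dim_customer "
    "(customer_id INTEGER, city TEXT, valid_from TEXT, valid_to TEXT)"
)
apply_scd2(conn, 1, "Lyon", "2024-01-01")
apply_scd2(conn, 1, "Lyon", "2024-02-01")   # unchanged: no new version
apply_scd2(conn, 1, "Paris", "2024-03-01")  # changed: old version closed, new one opened
history = conn.execute(
    "SELECT city, valid_from, valid_to FROM dim_customer ORDER BY valid_from"
).fetchall()
```

Each row in `history` is one version of the customer, so a query can reconstruct the state of the dimension at any past date.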

The possibility to make the platform evolve

Stambia is an adaptable platform. Templates, technologies and connectors delivered as standard can evolve very quickly to meet specific needs.

Stambia support, the partner network, and very often the users themselves, can create their own data integration logic or optimize the technical components, and then reuse these changes on subsequent projects.

In case of difficulty, Stambia teams are available and can deliver new components or connectors in record time (from a few days to a few weeks), allowing users to operate in agile mode with a solution always at hand.

More than connectors

Stambia offers ready-to-use components that manage the most popular modern platforms, as well as the oldest legacy systems.

Stambia offers extended functionality for cloud platforms like Snowflake, BigQuery and Redshift, and for Big Data platforms.

Bringing traditional BI together with new generation platforms, or simply migrating from one to the other, has never been so easy.

Integrated right to privacy

Many components are available as standard in the solution, without the need for an additional tool. This is the case for the data privacy protection tools.

This component, integrated naturally in the development tool (Designer), will allow us to manage many constraints imposed by GDPR projects, including everything related to the anonymization of data and the automation of tasks and alerts.
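To give an idea of what such processing can look like, here is a small sketch of two common techniques: keyed hashing of an identifier and masking of an email address. It is an assumption-laden illustration, not the Stambia component: `SECRET_KEY`, `pseudonymize` and `mask_email` are hypothetical names, and keyed hashing is strictly speaking pseudonymization rather than full anonymization.

```python
import hashlib
import hmac

# Hypothetical key; in practice it would come from a secrets manager, not source code.
SECRET_KEY = b"replace-with-a-managed-secret"


def pseudonymize(value, key=SECRET_KEY):
    """Replace an identifier with a keyed SHA-256 digest (stable for a given key)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_email(email):
    """Keep the first character and the domain, hide the rest of the local part."""
    local, _, domain = email.partition("@")
    return local[:1] + "***@" + domain


record = {"customer_id": "C-1001", "email": "jane.doe@example.com", "city": "Lyon"}
protected = {
    "customer_id": pseudonymize(record["customer_id"]),
    "email": mask_email(record["email"]),
    "city": record["city"],  # non-identifying attribute kept as-is
}
```

Because the digest is stable for a given key, pseudonymized identifiers can still be joined across tables without revealing the original value.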

Power of metadata for data lineage and governance

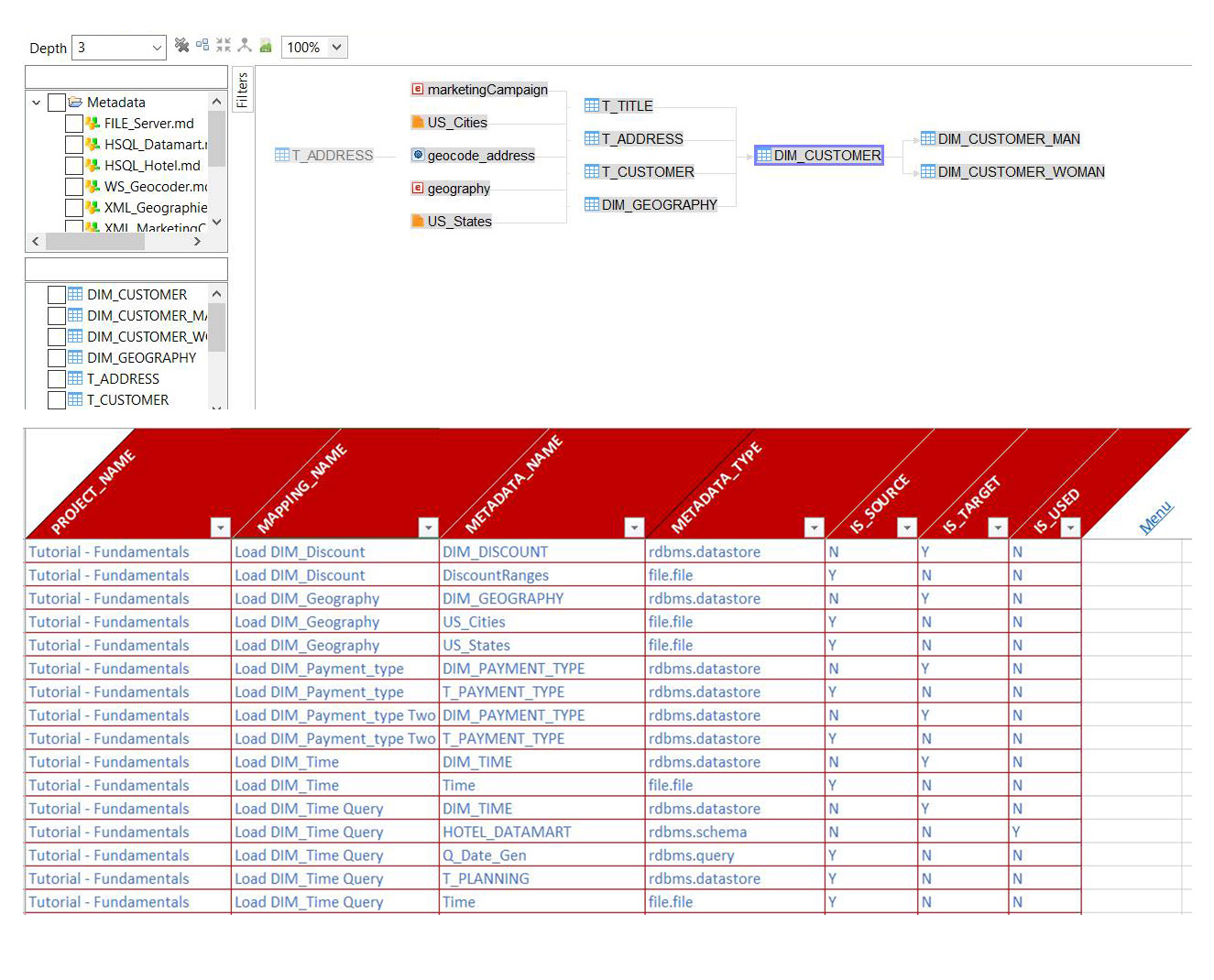

Thanks to its universal mapping that simplifies the vision of the development of data flows, Stambia offers simple and effective data lineage functionalities.

Stambia metadata is open (stored in XML) and can easily be exported to Information System governance solutions, or simply exported to tables for BI analysis.

A detailed and complete view of the flow of data from the sources to the targets is available to decision-makers to address data traceability issues.
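As a rough illustration of how XML metadata can be turned into lineage information, the sketch below parses a made-up mapping file. The XML layout (`mappings`, `mapping`, `source`, `target`), the table names and the functions `build_lineage` and `upstream` are all assumptions for this example; the actual Stambia metadata format is not shown here.

```python
import xml.etree.ElementTree as ET

# Hypothetical metadata layout, invented for this example.
MAPPING_XML = """
<mappings>
  <mapping name="load_dim_customer">
    <source table="crm.customers"/>
    <source table="erp.addresses"/>
    <target table="dwh.dim_customer"/>
  </mapping>
  <mapping name="load_fact_sales">
    <source table="dwh.dim_customer"/>
    <source table="pos.tickets"/>
    <target table="dwh.fact_sales"/>
  </mapping>
</mappings>
"""


def build_lineage(xml_text):
    """Return {target_table: sorted list of direct source tables}."""
    lineage = {}
    for mapping in ET.fromstring(xml_text).iter("mapping"):
        sources = [s.get("table") for s in mapping.findall("source")]
        for target in mapping.findall("target"):
            lineage.setdefault(target.get("table"), set()).update(sources)
    return {table: sorted(srcs) for table, srcs in lineage.items()}


def upstream(lineage, table):
    """Return every table that feeds `table`, directly or indirectly."""
    seen, stack = set(), [table]
    while stack:
        for src in lineage.get(stack.pop(), []):
            if src not in seen:
                seen.add(src)
                stack.append(src)
    return sorted(seen)


lineage = build_lineage(MAPPING_XML)
print(upstream(lineage, "dwh.fact_sales"))
```

The same dictionary could be written to a table for BI analysis, in line with the export options mentioned above.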

Technical specifications

| Specification | Description |
|---|---|
| Protocol | HTTP REST / SOAP |
| Output format | XML, JSON + any specific format |
| Connectivity | To find out more, consult the technical documentation |
| Description | |
| Execution | |
| Stambia Designer Version | From Stambia Designer s18.2.0 |
| Runtime Stambia Version | From Stambia Runtime S17.3.0 |
| Complementary notes | |


Semarchy has acquired Stambia

Stambia becomes Semarchy xDI Data Integration